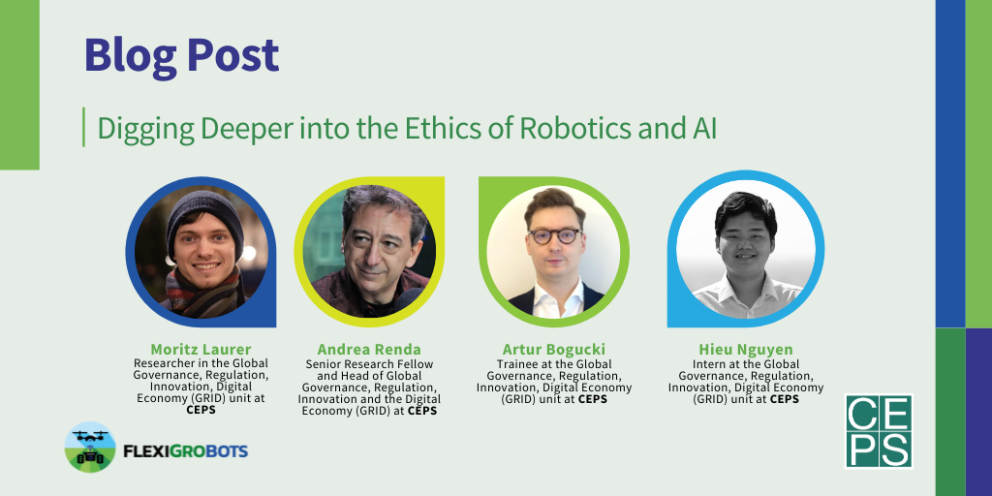

Written by: Moritz Laurer, Andrea Renda, Artur Bogucki, Hieu Nguyen (CEPS, Centre for European Policy Studies)

Everyone wants robots to be safe, AI to be trustworthy and technology to be sustainable. But what does this mean in practice? How should general ethical principles be applied in concrete use cases? What are the socio-economic and legal impacts of robotics and AI in agriculture, and how can they be measured? These are the key questions for CEPS in FlexiGroBots.

Building upon existing public and private standards

Both technical experts in the private sector and regulators in the public sector have started answering these questions. In the private sector, model cards and datasheets have emerged as practical tools for reporting on key properties of AI algorithms and the underlying training data (Google 2020); research groups have developed practical tools for measuring the CO2 emissions of algorithmic training (CodeCarbon 2021); others have developed tools for anonymising (personal) data more easily. In the public sector, the EU has led the way with a practical Assessment List for Trustworthy AI (ALTAI, HLEG 2020); the proposed AI Act aims to make good data science practices legally binding for high-risk AI; and many other legal initiatives try to catch up with the opportunities and risks of new technologies.
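To make the idea of a model card more concrete, here is a minimal sketch in Python of what such a reporting structure could look like. It is an illustration only: the class `ModelCard`, its field names and the example model `weed-detector-demo` are assumptions made for this sketch, not a FlexiGroBots template or an official model card format.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import Optional


@dataclass
class ModelCard:
    """Illustrative model card. All field names are assumptions for this sketch."""
    model_name: str
    intended_use: str
    training_data: str  # short description of the underlying dataset
    evaluation_metrics: dict = field(default_factory=dict)
    ethical_considerations: list = field(default_factory=list)
    estimated_training_co2_kg: Optional[float] = None  # e.g. from an emissions tracker

    def missing_fields(self) -> list:
        """Return the names of fields left empty, so reporting gaps are visible."""
        empty_values = ("", None, {}, [])
        return [name for name, value in asdict(self).items() if value in empty_values]

    def to_json(self) -> str:
        """Serialise the card so it can be published alongside the model."""
        return json.dumps(asdict(self), indent=2)


# Hypothetical example: a card that is only partly filled in.
card = ModelCard(
    model_name="weed-detector-demo",
    intended_use="Detect weeds in field images (hypothetical example)",
    training_data="",
)

print(card.missing_fields())
print(card.to_json())
```

A structure like this makes gaps explicit: the call to `missing_fields()` lists everything that still has to be documented before the card is complete.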

In FlexiGroBots, we build upon these private and public standards to develop practical recommendations for our three pilots on autonomous robots in agriculture. We go deeper into the literature, conduct interviews with practitioners on the ground, distil existing best practices and adapt them to our specific use cases.

Digging deeper into the technical pilots

Close cooperation with technical partners and stakeholders on the ground is key for developing meaningful recommendations. We are therefore conducting a series of interviews with partners and stakeholders to better understand their use cases. This helps us understand the hidden ethical challenges and opportunities, socio-economic impacts and legal challenges.

The first months have already led to important insights. Interviews with practitioners have shown that classical questions of bias and fairness are harder to apply in agriculture, where plants are the primary object of analysis instead of people. At the same time, the physical safety dimension is particularly important when working with autonomous robot systems. Moreover, data protection becomes more important than one might expect, given the many camera systems necessary for autonomous robots.

Besides interviews, CEPS scanned 426 different publications for opportunities and challenges of robotics and AI and classified key arguments into challenges and opportunities from an ethical, legal, societal, economic and environmental perspective (‘ELSE’ factors). Figure 1 shows the dominant discourse in the literature: economic and environmental impacts are seen more as opportunities, while ethical, legal and societal impacts are perceived as more challenging. Many researchers identify the potential for increasing productivity, and several argue that technology can improve responses to environmental challenges like climate change. At the same time, many point out that new technologies entail important ethical challenges like transparency, that clear legal frameworks are missing and that important social safety risks remain unresolved.

CEPS and our partners will continuously assess diverse ethical, legal, socio-economic and environmental risks and opportunities throughout the project and develop recommendations for our partners and beyond.

Developing recommendations and bringing technology and policy together

FlexiGroBots does not operate in a vacuum. It is our ambition to develop recommendations and tools which go beyond our particular use cases. We hope that our use of model cards and datasheets, for example, will inspire other organisations to adopt these tools for ethical and technical reporting. To this end, we plan to publish templates which any organisation can use. Moreover, we will closely analyse gaps and opportunities in the current legal frameworks and develop recommendations for policy makers based on our field experience in the pilots. Working together with our partners in this way will enable us to bridge the gap between academic research, technical implementation and regulation. Two exciting years lie ahead of the FlexiGroBots project – stay tuned.